Which one is more toxic? Findings from Jigsaw Rate Severity of Toxic Comments

Abstract

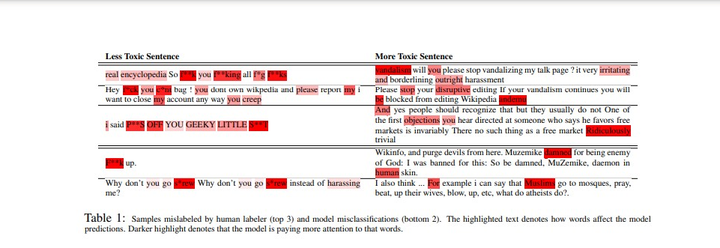

The proliferation of online hate speech has necessitated algorithms that can detect toxicity. Most past research treats this detection as a classification task, but assigning an absolute toxicity label is often difficult. A few past works have therefore recast the task as regression. This paper presents a comparative evaluation of transformer-based and traditional machine learning models on a toxicity severity dataset recently released by Jigsaw. We further use explainability analysis to demonstrate issues with the model predictions.
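As a rough illustration of the "which one is more toxic?" framing, the sketch below checks how often a severity scorer ranks an annotator-labelled pair in the expected order. It is only a minimal sketch under stated assumptions: `pairwise_agreement`, `toy_score`, the tiny lexicon, and the example pairs are all hypothetical and are not taken from the paper, which may evaluate its models differently.

```python
from typing import Callable, Iterable, Tuple

Pair = Tuple[str, str]  # (less_toxic, more_toxic), as judged by an annotator


def pairwise_agreement(score: Callable[[str], float], pairs: Iterable[Pair]) -> float:
    """Fraction of pairs where the scorer rates the 'more toxic' text strictly higher."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("need at least one pair")
    hits = sum(1 for less, more in pairs if score(less) < score(more))
    return hits / len(pairs)


def toy_score(text: str) -> float:
    """Hypothetical stand-in for a regression model: share of words in a small lexicon."""
    lexicon = {"idiot", "stupid", "hate"}  # illustrative only
    words = [w.strip(".,!?") for w in text.lower().split()]
    return sum(w in lexicon for w in words) / max(len(words), 1)


# Made-up example pairs; the last one contains no lexicon words on either side,
# so the toy scorer ties and the pair counts as a miss.
example_pairs = [
    ("I disagree with you.", "You are a stupid idiot."),
    ("Please stop.", "I hate you, idiot!"),
    ("You people are the worst.", "Nobody likes you, loser."),
]

agreement = pairwise_agreement(toy_score, example_pairs)
print(f"agreement: {agreement:.3f}")
```

Any regression model that maps a comment to a real-valued severity score could be passed in place of `toy_score`; ties are counted as disagreements here, which is a design choice rather than a requirement.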

Publication

Threat, Aggression and Cyberbullying 2022

Main contributions

Coming soon

Limitations

Coming soon

Future Directions

Coming soon